The Generative Edge - Week 2

Mixing your experts, creative outputs with Stable Diffusion LoRAs and OpenAI's GPT Store is open for business.

Welcome to week 2 of The Generative Edge. Here is the gist in 3 bullets:

- Mistral AI introduces a Mixture of Experts approach to open models for more efficient language processing, using many specialized models for faster and smarter responses.

- Generative image LoRAs offer highly customized image outputs and show how open image models can be tailored to your specific use cases.

- OpenAI's GPT Store creates a new ecosystem for ChatGPT, allowing users to customize and integrate a variety of functionalities, from APIs to image generation.

For more details, let’s hop right in!

Mixing Experts

Current language models are known for needing a lot of compute resources to generate their outputs. Since compute is both expensive and in high demand, there's been a push for smarter, more efficient solutions.

One that stands out is called Mixture of Experts (MoE).

Inference, meaning having the LLM generate text, can take a considerable amount of time and resources, because the prompt has to pass through every layer of a vast neural network. Is there maybe a better approach?

There is: MoE. Picture it (in the case of Mixtral) as a team of eight smaller, specialized experts instead of one giant, do-it-all LLM. Each expert in this MoE team is really good at handling specific types of data or tasks. When a query comes in, a routing system hands each piece of it (each token) to the most suitable experts, two at a time in Mixtral's case, so only a fraction of the model has to do work at any given moment.
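To make the routing idea a bit more concrete, here is a minimal sketch in plain Python. It is a toy under stated assumptions, not Mixtral's actual implementation: the "experts" are hypothetical stand-ins that just scale their input, the router weights are random, and the names `route`, `make_expert` and `router_weights` are made up for illustration. The only details borrowed from Mixtral are the eight experts and the top-2 selection.

```python
import math
import random

NUM_EXPERTS = 8   # Mixtral uses eight experts per layer
TOP_K = 2         # and sends each token to two of them


def softmax(scores):
    """Turn raw scores into weights that sum to 1."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def make_expert(scale):
    """Hypothetical stand-in for an expert network: it just scales its input."""
    return lambda token: [scale * x for x in token]


def route(token, router_weights, experts, top_k=TOP_K):
    """Send one token vector to its top_k experts and blend their outputs."""
    # One router score per expert: dot product of the token with that expert's row.
    scores = [sum(w * x for w, x in zip(row, token)) for row in router_weights]
    # Keep only the top_k highest-scoring experts; the others are never run.
    chosen = sorted(range(len(experts)), key=lambda i: scores[i], reverse=True)[:top_k]
    # Normalize the chosen scores so the blend weights sum to 1.
    weights = softmax([scores[i] for i in chosen])
    output = [0.0] * len(token)
    for weight, idx in zip(weights, chosen):
        expert_out = experts[idx](token)
        output = [o + weight * e for o, e in zip(output, expert_out)]
    return output, chosen


random.seed(0)
DIM = 4
experts = [make_expert(i + 1) for i in range(NUM_EXPERTS)]
router_weights = [[random.uniform(-1, 1) for _ in range(DIM)] for _ in range(NUM_EXPERTS)]
token = [0.5, -1.0, 2.0, 0.1]

output, chosen = route(token, router_weights, experts)
print("experts used:", chosen)
print("output:", output)
```

The efficiency gain comes from the line that picks `chosen`: six of the eight experts are skipped entirely for this token, so the compute per token is much lower than the total model size would suggest.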

- Mistral AI, a very promising French startup, recently released their MoE model Mixtral.

- ChatGPT / GPT-4 are rumored to use an MoE architecture as well.

- Other promising models like Microsoft’s Phi-2 are also being thrown into an expert mix (and they work quite well).

Other innovations will follow MoE, and we can expect stronger and more efficient models because of them.

The power of generative image LoRAs

Open image models like Stable Diffusion can be modified in many ways. We have talked about LoRA (low-rank adaptation) before: a small add-on, almost like a plugin, that changes the output of Stable Diffusion in very distinct ways.

Installing and using a LoRA is a bit of a technical affair, but you can browse various models and LoRAs on https://civit.ai. Fair warning: stray too far from the featured section at your own risk.
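For the curious, the "plugin" feeling comes from how a LoRA is stored: instead of a full copy of the model's weights, it holds two small matrices whose product gets added on top of the original weights. Below is a minimal sketch in plain Python, assuming a toy 4x4 weight matrix and made-up adapter values. The function names `matmul` and `apply_lora` are hypothetical, and the `alpha / rank` scaling is the convention from the original LoRA paper rather than something specific to any one Stable Diffusion tool.

```python
def matmul(a, b):
    """Multiply two matrices given as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def apply_lora(base, lora_b, lora_a, alpha, rank):
    """Return base + (alpha / rank) * (lora_b @ lora_a) without modifying base."""
    scale = alpha / rank
    delta = matmul(lora_b, lora_a)
    return [[w + scale * d for w, d in zip(w_row, d_row)]
            for w_row, d_row in zip(base, delta)]


# Toy 4x4 "model weight" (identity matrix) and a rank-1 adapter; all values are made up.
base = [[1.0 if i == j else 0.0 for j in range(4)] for i in range(4)]
lora_b = [[1.0], [0.0], [0.0], [2.0]]   # shape: 4 x rank
lora_a = [[0.5, 0.0, 0.0, 1.0]]         # shape: rank x 4

adapted = apply_lora(base, lora_b, lora_a, alpha=1.0, rank=1)

base_params = len(base) * len(base[0])
lora_params = len(lora_b) * len(lora_b[0]) + len(lora_a) * len(lora_a[0])
print("adapted weight:", adapted)
print("base parameters:", base_params, "adapter parameters:", lora_params)
```

In this toy case the adapter is half the size of the weight it modifies. For the much larger matrices in a real image model the gap widens dramatically, which is why LoRA files are small and easy to share, and why the base model stays untouched so adapters can be swapped in and out.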

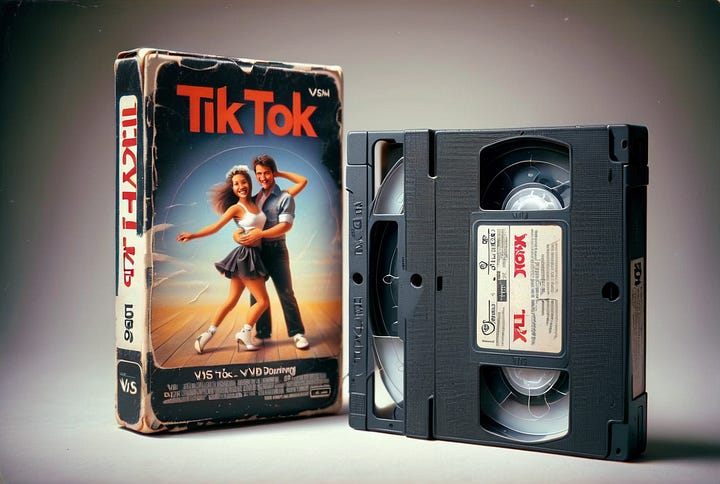

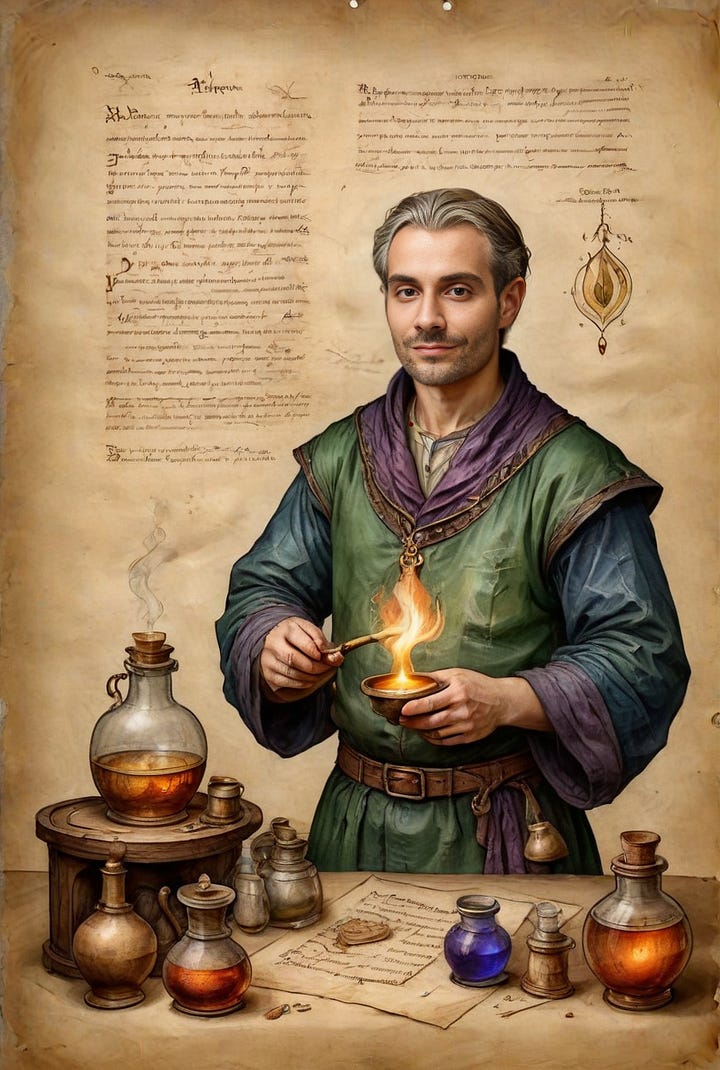

LoRAs exist for almost anything: creating VHS covers, Ikea manuals, fantasy characters on parchment and many, many more.

Open models are highly customizable in what they generate and how, which makes them well suited for integration into pipelines that generate furniture, fashion, vehicles, styles, concepts, and more.

Midjourney, DALL-E & Co are powerful, easy-to-use tools, but when it comes to building custom pipelines that can slot into existing business processes, it’s hard to beat the flexibility of open models.

GPT Store

OpenAI is eager to further develop their moat and to turn their offering into a proper platform. In the past they’ve introduced plugins to integrate external services with ChatGPT.

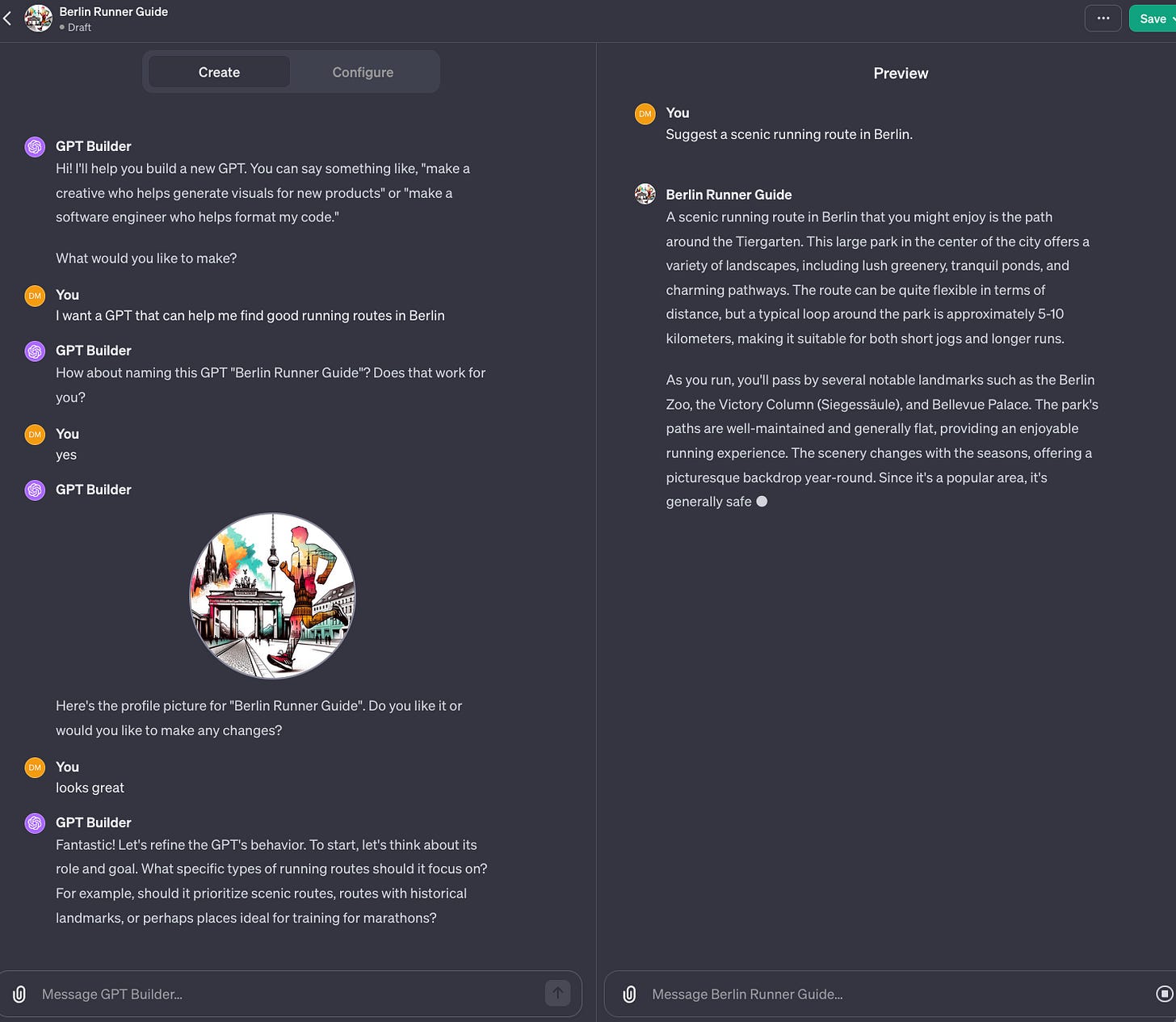

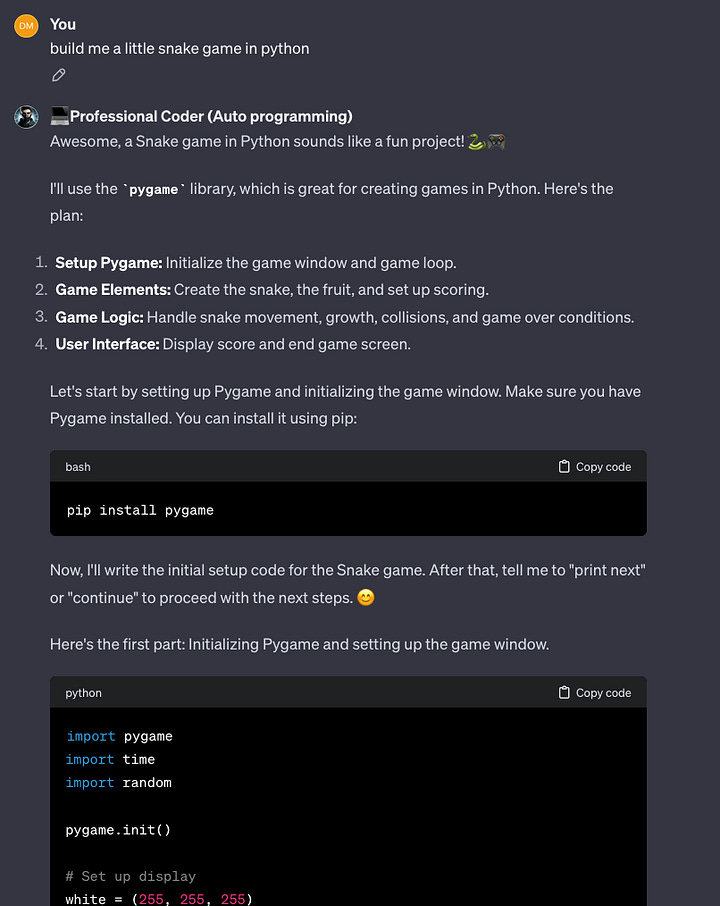

During last November’s DevDay, OpenAI announced “GPTs”, the successor to plugins and a new way to create customized ChatGPT use cases that can include prompts, files, external APIs, web search, image generation and code execution.

These GPTs could only be shared via direct links for the longest time, but last week OpenAI tackled the issue of discoverability and opened the GPT Store.

GPTs can be quite flexible in what they can do, from making travel plans and booking flights to helping you write and recommending books.

- OpenAI plans to offer monetization options down the road.

- At this point, using and building GPTs requires a ChatGPT Plus subscription.

OpenAI continues to build out their platform, though some questions remain: what will lock-in and third-party functionality look like in the future? Will your GPTs end up as core features in ChatGPT? Are we doing their work for them?

GPTs are not very transparent. As a user, you don’t know what features, files and prompts are involved, which can create a bit of a trust problem.

All that said, custom GPTs are a great way to encapsulate functionality, interconnect with external services and quickly prototype and share various ChatGPT use cases with others.

… and what else?

ChatGPT Team is available: OpenAI promises not to train on your data, plus you get to create and share internal GPTs.

The GenAI Meetup in Berlin is happening Wednesday, 16.01.24. Check it out if you’re in town! https://www.meetup.com/berlin-practical-genai-meetup/events/297941071 - we are also giving a talk there and are sponsoring the venue.

And that’s it for this week!

Find all of our updates on our Substack at thegenerativeedge.substack.com, get in touch via our office hours if you want to talk professionally about Generative AI and visit our website at contiamo.com.

Have a wonderful week everyone!

Daniel

Partner and Generative AI lead at Contiamo